AI-driven errors in cloud infrastructure create vulnerabilities that adversaries could exploit—putting large portions of the internet at risk without stronger safeguards and oversight.

Recently, Amazon Web Services (AWS) suffered an outage for over 12 hours.

It’s hard to overstate the importance of AWS to the internet. AWS servers host millions of websites and dominate the cloud industry, controlling roughly 30 percent of it, a plurality. If AWS were to somehow go down for an even more significant period of time, or were to go down permanently, it could effectively take a third of the internet with it.

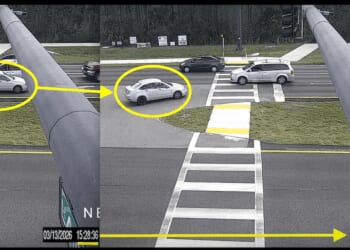

But earlier this year, a version of that scenario played out: AWS went down for roughly 13 hours. That it went down is not particularly shocking; even well-oiled machines run into problems, and servers are no different. The concern was how it reportedly went down: through an error driven by artificial intelligence (AI), with the Guardian claiming that there were two outages related to AI. The Financial Times reported that Amazon held an emergency meeting over the issue.

Amazon was quick to put out a statement addressing the claims, but the statement was something short of a denial. They did admit that “only one of the recent incidents involved AI tools,” but blamed it on the engineer, not the AI, writing that “our systems allowed an engineering team user error” to have a broad impact. Other reporting indicated that this was due to the AI using an outdated internal wiki to wrongly inform an engineer. They also denied that AWS was affected by AI, although numerous news organizations reported otherwise.

There are several concerns with Amazon’s statement, including the company’s apparent willingness to throw its own personnel under the bus. The term “systems” is also concerningly vague: does Amazon mean AI systems, or something else? But both of these are issues for Amazon and its shareholders.

AI-Driven Cloud Infrastructure Risks Expose Vulnerabilities in AWS and the Internet

Policymakers should be concerned with something else: that Amazon essentially admitted, between the lines, that yes, AI was responsible for the issues. And even if the reporting about AWS outages is not accurate (to believe that, one would need to take Amazon’s word), it seems only a matter of time before AI gives an engineer outdated information about something AWS-related.

Essentially, one-third of the entire internet could theoretically go down at any time because of poor use of AI.

Foreign Adversaries Could Exploit AI Systems to Disrupt Critical Internet Infrastructure

The problem gets worse, however, when one considers how bad actors could take advantage of this situation. The ability of foreign governments to manipulate AI has been a concern for the past few years, with discussions ranging from teaching AI to think incorrectly (such as feeding it false information about how a military trains, thereby “teaching” it to think falsely) to simply hacking it outright.

But taking down the internet would be even easier than that. The situation could go like this: a foreign intelligence service quietly changes Amazon’s internal wiki, the information used by Amazon’s AI. The AI then uses that now purposefully corrupted wiki to give false information to a bevy of coding engineers, immediately causing a significant part of the internet to entirely stop working.

This does not require months or years of effort, as training an AI might. Nor does it require an outright hack, which may be detectable. It simply requires a relatively easy edit of a likely low-security internal wiki.

AI Hallucinations and Unreliable Outputs

We currently treat AI as something like a bicycle. If you pedal, it goes forward. If you hit the brake, it stops. “AI is a tool” has been argued for years, but it’s not true. If you hit the brakes on a bike, it won’t make you go faster. If you pedal forward, it won’t go backward. A tool offers no surprises. Hitting a nail with a hammer will drive the nail into wood. The hammer will not spontaneously combust, or suddenly magnetize to the nail and pull it back up.

AI does unexpected things because it does not really “know” anything. It is only as good as the information that goes into it, and even then, it frequently makes mistakes because of how it constructs its answers. Anyone who has used AI likely has some sort of story about it being flatly wrong. Even innocuous uses, such as treating it as a faster search engine, often come up short. In a recent test, I asked ChatGPT to find a letter written by Andrew Jackson (I had a portion of the letter in a physical book in front of me). Not only did it fail to do so, but it also informed me that the author of the book, one of the most respected Jacksonian scholars ever, frequently made up sources and letters. This was, of course, untrue, but had I known less about the subject, I would have come away believing the pre-eminent Jackson scholar was a fraud.

And this is the issue with viewing AI as a tool: you trust that a tool will do what it was built for. You trust a bicycle to go when pedaled, and Amazon’s coding engineers clearly trusted AI to read the internal wiki correctly.

But because it will not work like a tool, policymakers are essentially depending on AI not to make mistakes in order to keep the internet afloat. Yes, humans are making the final decisions (at least, in the present), but those decisions are based on faulty and sometimes entirely hallucinated “help” from AI.

US Policymakers Must Regulate AI Use in Cloud Computing and Critical Infrastructure

There are a few directions Washington could take to address this technological time bomb. It could ban AI from being used or consulted by coding engineers who work on certain projects. AWS and the internet worked before AI; there is no inherent need to involve it now. It could also mandate that any information AI relies upon be regularly updated or be more strongly protected from external bad actors.
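To make the second option concrete, here is a minimal Python sketch of what a "regularly updated and protected" requirement might look like in practice. It is an illustration under stated assumptions, not a description of any system Amazon actually runs: the `WikiPage` record, the `check_page` function, and the 90-day review window are all hypothetical. The idea is simply that a page is withheld from an AI assistant if no human has reviewed it recently, or if its text no longer matches a digest recorded when it was last approved.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class WikiPage:
    """Hypothetical internal wiki page that an AI assistant might consult."""
    title: str
    body: str
    last_reviewed: datetime  # when a human last confirmed the page is current
    approved_sha256: str     # digest of the body recorded at that approval


def sha256_hex(text: str) -> str:
    """Return the SHA-256 digest of the text as a hex string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def check_page(page: WikiPage, now: datetime, max_age: timedelta) -> list[str]:
    """Return the reasons to withhold the page from the assistant (empty if none)."""
    problems = []
    # Freshness: has a human reviewed this page within the allowed window?
    if now - page.last_reviewed > max_age:
        problems.append("stale")
    # Integrity: does the current text still match what was approved?
    if sha256_hex(page.body) != page.approved_sha256:
        problems.append("modified since approval")
    return problems


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    body = "Example runbook text."
    page = WikiPage(
        title="Example runbook",
        body=body + " (quietly edited)",  # simulate an unapproved change
        last_reviewed=now - timedelta(days=400),
        approved_sha256=sha256_hex(body),
    )
    # Assumed policy for this sketch: pages must be reviewed every 90 days.
    print(check_page(page, now, max_age=timedelta(days=90)))
```

A check like this only helps if the approved digests are stored somewhere that a person with wiki edit access cannot also change; otherwise, whoever alters the page can alter the record of what was approved.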

Either of the above suggestions will likely receive serious pushback from AI companies. This is to be expected: auto manufacturers resisted the introduction of seatbelts. But resistance from Big Tech should not stop Washington. After all, the internet itself might be at stake.

About the Author: Anthony J. Constantini

Anthony J. Constantini is a policy analyst at the Bull Moose Project. His work has appeared in a variety of domestic and international publications.